Senior Data Engineer - Snowflake Platform

The Senior Data Engineer is responsible for creating, transforming, and expanding the data pipelines that deliver data to target destinations for consumption across the Credit Union data architecture, and for the development, maintenance, testing, and support of these solutions. The engineer will create and maintain optimal data pipeline architecture; assemble large, complex data sets that meet functional and non-functional business requirements; and identify, design, and implement internal process improvements such as automating manual processes, optimizing data delivery, and redesigning infrastructure for greater scalability.

The engineer will build the infrastructure required for optimal ETL/ELT of data from a wide variety of data sources using SQL and cloud "big data" technologies, and will manage the Snowflake environment, including administration, data ingestion, and security.

The role involves collaborating with stakeholders, including the Executive, Product, Data, and Design teams, to assist with data-related technical issues and support their data infrastructure needs, while ensuring the security of SFFCU and Member data and compliance with NCUA and regulatory policies. The engineer will also create data tools that help analytics and data science team members enhance their analytic capabilities, and will strive for greater functionality in the data ecosystem.

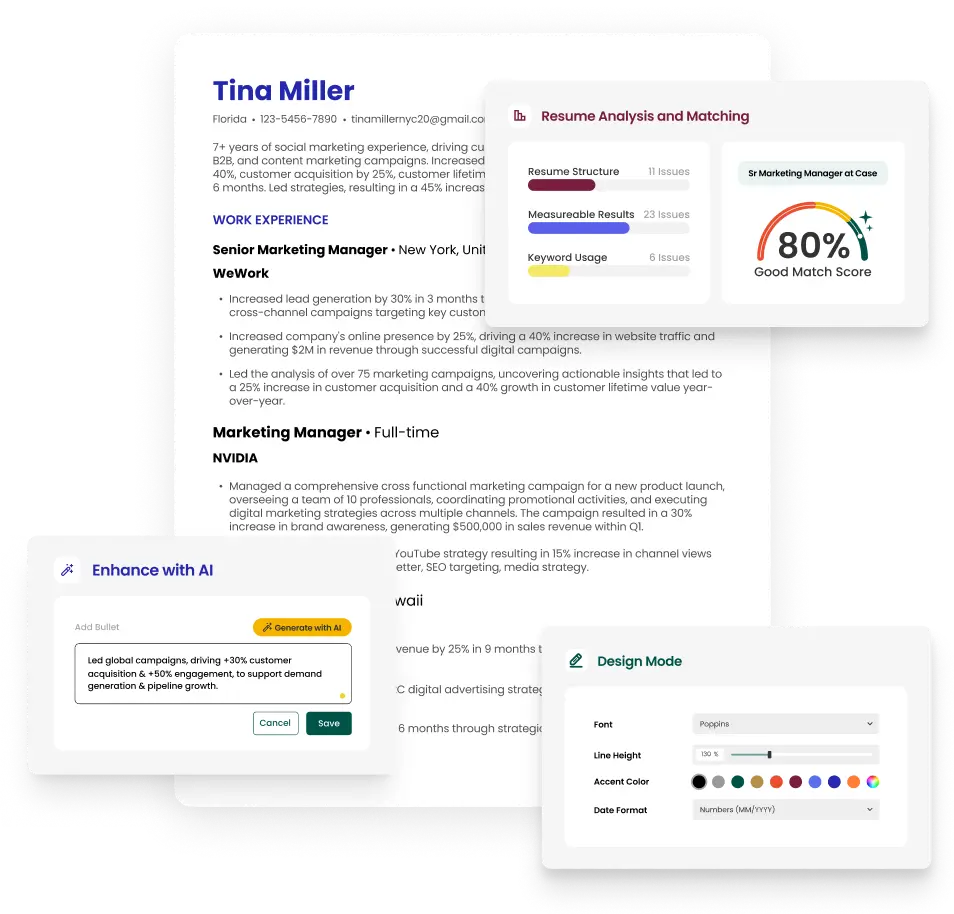

Stand Out From the Crowd

Upload your resume and get instant feedback on how well it matches this job.

Job Type

Full-time

Career Level

Senior

Number of Employees

1-10 employees
