Senior ML Engineer - Model Compression

790 jobs found — updated daily

The Compression and Parity team in GM’s Autonomous Vehicle (AV) Organization enables repeatable, high-velocity model deployments through principled and automated model compression under strict safety guarantees. We partner closely with model developers and deployment and infra engineers to ship numerically robust, low-latency models to the car, blending rigorous analysis with state-of-the-art methods and our own innovations. Over time, you will help grow and evolve the Compression and Parity function through developing and iterating on quantization and compression strategies for AV models, considering model numerical properties, safety and latency constraints, and hardware performance. You will partner on the deployment of quantized models to NVIDIA-based AV hardware with deployment, compiler, and kernel teams. You will advance numerical sensitivity analyses to recommend safe compression policies per op/layer/block, use AV-relevant metrics to evaluate compressed models, and collaborate with Embodied AI to support compression-aware modeling. You will evolve sensitivity analysis, compression, and parity tooling into a connected, automated flow that makes low-precision deployments repeatable, reliable, and low-touch, with an emphasis on robust execution and maintainability. You will bridge the gap between state-of-the-art model compression research and safety-constrained deployment while making strong technical contributions in cross-functional projects and educating others on best practices.

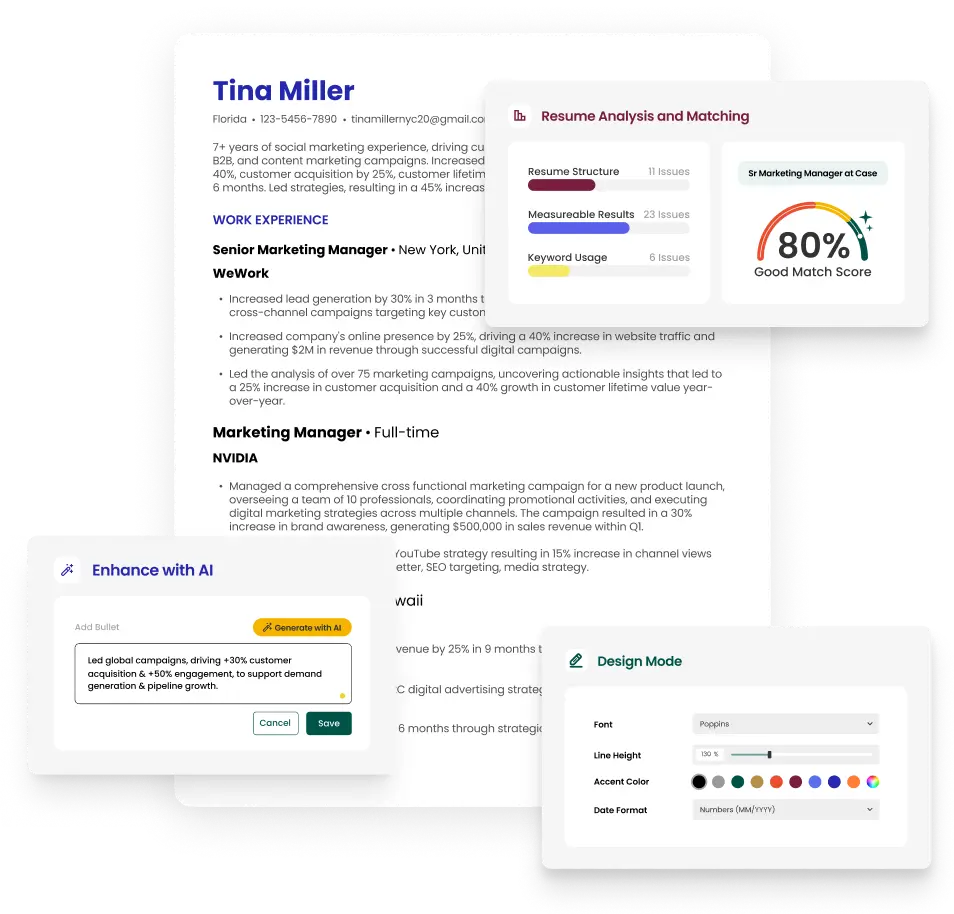

Stand Out From the Crowd

Upload your resume and get instant feedback on how well it matches this job.

Job Type

Full-time

Career Level

Senior

The resume builder that gets results.